Recently, there has been a lot of attention on artificial intelligence (AI). This has naturally led our nerdy minds 🤓 to discuss the potential of AI in collective intelligence. In this article, we will provide a brief overview of the current state of the field before delving into our main topic of interest: using AI for collective intelligence and creative problem solving.

Edit: Since this article was published, we have developed seven powerful AI features and tested them with our community of facilitators. Now it's time to up your game! Alexandre has also analyzed the shortcomings of an AI-powered clustering feature and presented a new and improved process.

No time to read the full article?

- Some of the most notable trends in the field of AI include (among others): Deep learning, Large Language Models, Robotics, Computer vision, Generative Art, Explainable AI and Edge computing

- While AI systems can gather large amounts of data and perform tasks based on that data, they do not truly understand the concepts they are processing and may not be able to generate innovative or subversive ideas on workshop topics.

- To use AI for collective intelligence, it may be helpful to explore features such as summarizing and thematizing content, analyzing reactions, and prototyping.

- As with any new technology, it is important to understand the limitations and potential dangers of AI in order to clearly envision its potential impacts.

- In our view, AI should be seen as a tool that enhances human intelligence and whose capabilities can help alleviate group biases.

What is AI?

Artificial intelligence (AI) is a field of computer science and engineering that aims to create intelligent programs, or "machines," that can perform tasks that typically require human intelligence, such as learning, problem-solving, decision-making, and perception.

Current trends

Some notable trends in AI include:

- Deep learning: This is a type of machine learning that involves the use of artificial neural networks to learn and make decisions based on data. It is expected to play a key role in the development of many AI applications.

- Robotics: AI is used to develop advanced robots that can perform tasks in a variety of environments, including manufacturing, healthcare, and service industries. AI tools can also provide predictive maintenance, allowing us to know in advance when machines will need servicing or repair.

- Computer vision: This is the ability of AI systems to analyze and understand visual data from the world around them. Computer vision is used in autonomous vehicles, image recognition, and augmented reality.

- Generative art: This enables automated software to create unique images and artwork from simple text prompts.

- Large language models (LLMs) and natural language processing (NLP): NLP is a branch of AI that focuses on the development of systems that can understand, interpret, and generate human language. NLP is used in chatbots, language translation systems, and voice recognition systems.

Be among the first to use Artificial Intelligence for brainstorming and workshop facilitation!

Facilitators, are you worried you have missed the AI bus? Not to worry. Today you catch up…

Implementations

Thanks to the work on these different approaches to AI, a variety of applications have reached the public: smart assistants (Alexa, Google Assistant), autonomous vehicles, home automation systems (lighting, heating, etc.), chatbots on apps and websites, language translation systems, and image and facial recognition systems.

Recently, AI has drawn a lot of attention following the release of ChatGPT, a chatbot built on OpenAI's GPT-3 family of Large Language Models (LLMs). ChatGPT is AI-powered software that can generate human-like text and can be used for natural language processing tasks such as language translation, summarization, and question answering. You type a question or task in a field, and it responds by performing language processing tasks.

How does it relate to collective intelligence and creative problem solving?

Now that we have provided a brief overview of the current state of the field, we can start discussing our main topic of interest: using AI for collective intelligence and creative problem solving. But why did we want to discuss this new development?

This emergence of AI reminds us of the introduction of technology in workshop facilitation about 10 years ago. When Stormz appeared in 2012, some facilitators were curious while others feared that technology might "take their job" or distract participants and decrease the quality of discussions during workshops. Over time, more and more facilitators have recognised the advantages of using technology and some have eventually begun to adopt it (others have not, and we understand perfectly). With AI, as with any other technology, it is up to all of us as practitioners to share knowledge about these new tools, so that we can determine what could improve or hinder the dynamics of our workshops and which tools are useful and which are not.

Therefore, perhaps the question we should ask ourselves is: How can AI be used to enhance collective intelligence during workshops? Would its adoption be relevant?

Why AI will not (yet) find creative solutions to your problems

Let's start with some thoughts that will reassure you about the sustainability of collaborative workshops and the usefulness of human intelligence:

AI cannot innovate

It is important to understand that AI does not truly understand the concepts it gathers. As Louis Rosenberg explains: "I entered a text prompt asking for artwork depicting a robot holding a paintbrush, but the software has no understanding of what a 'robot' or a 'paintbrush' is. It created the artwork using a statistical process that correlates imagery with the words and phrases in the prompt." If you have some time, there is a video (in French, with captions available) that explains how ChatGPT works and why the bot's answers are not meant to be correct or true: the bot simply chains the most statistically probable words, one after the other.

So AI cannot innovate

It is important to note that AI is not a single entity and does not have a collective will or consciousness of its own. AI systems are designed and developed by humans, and they perform tasks and make decisions based on the algorithms and data that have been programmed into them.

« It is important to remember that generative AI systems are not creative. In fact, they’re not even intelligent. »

In an interview on the French radio station France Inter, Luc Julia, IT engineer, Scientific Director of the Renault group and co-creator of the voice assistant Siri, agreed that what he calls "generative" AI is accelerating, but he also emphasized that these machines do not create or innovate: they simply copy and rearrange existing material (he uses the term "re-chew").

Artists may paint in the style of another artist, but when genius artists innovate, they bring something new and never seen before. AI machines cannot innovate in this way because they must draw on already existing material. Innovation may occur in how humans use prompts, but it is important to note that AI models have often been trained on existing, sometimes outdated data (for example, ChatGPT's training data only runs up to 2021). This is what leads Rosenberg to say that when something is created by AI, humanity is the creator: "My view is that we all created that artwork - humanity itself. Yes, the collective we call humanity is the artist."

« The collective we call Humanity is the artist. »

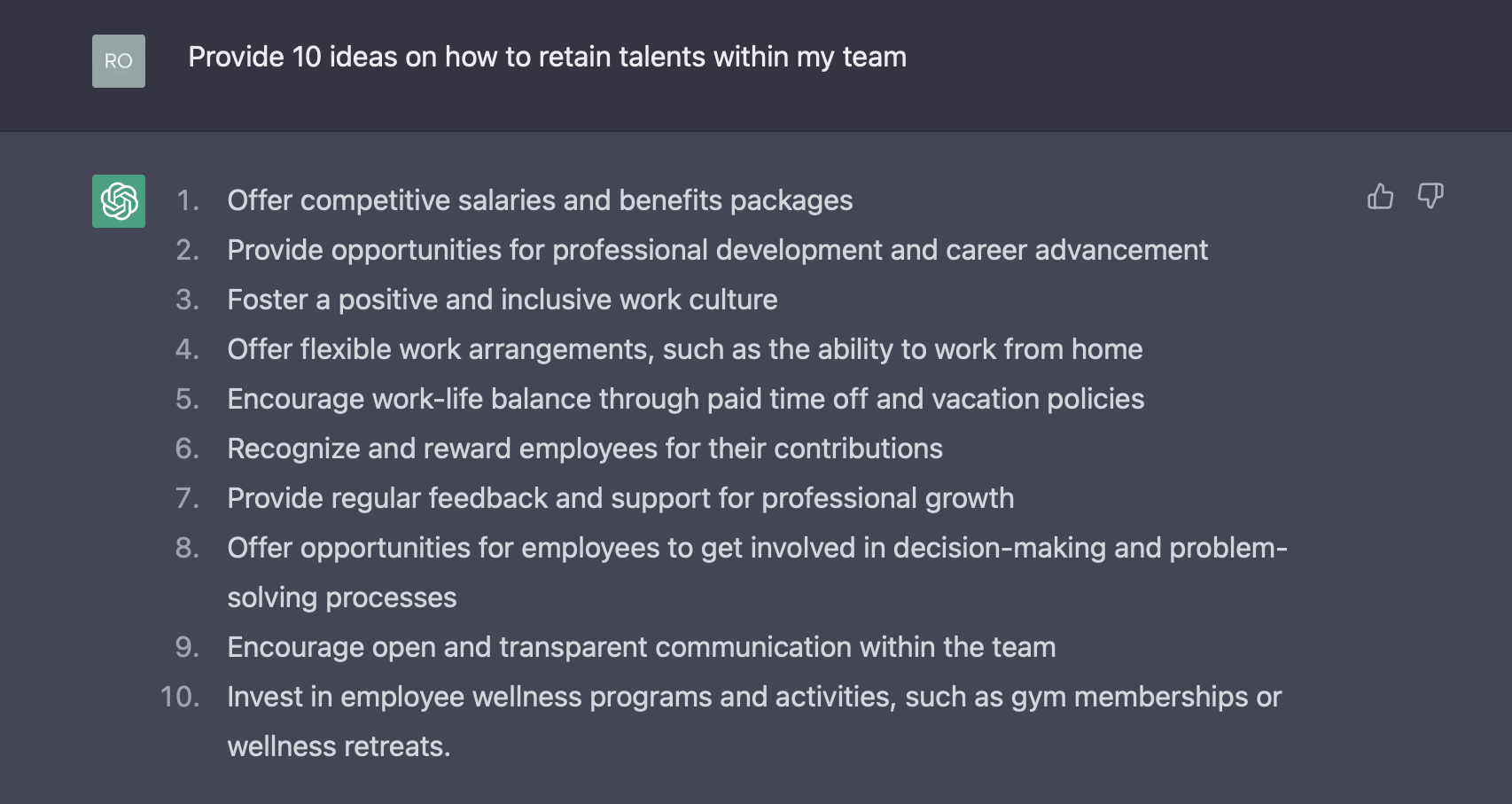

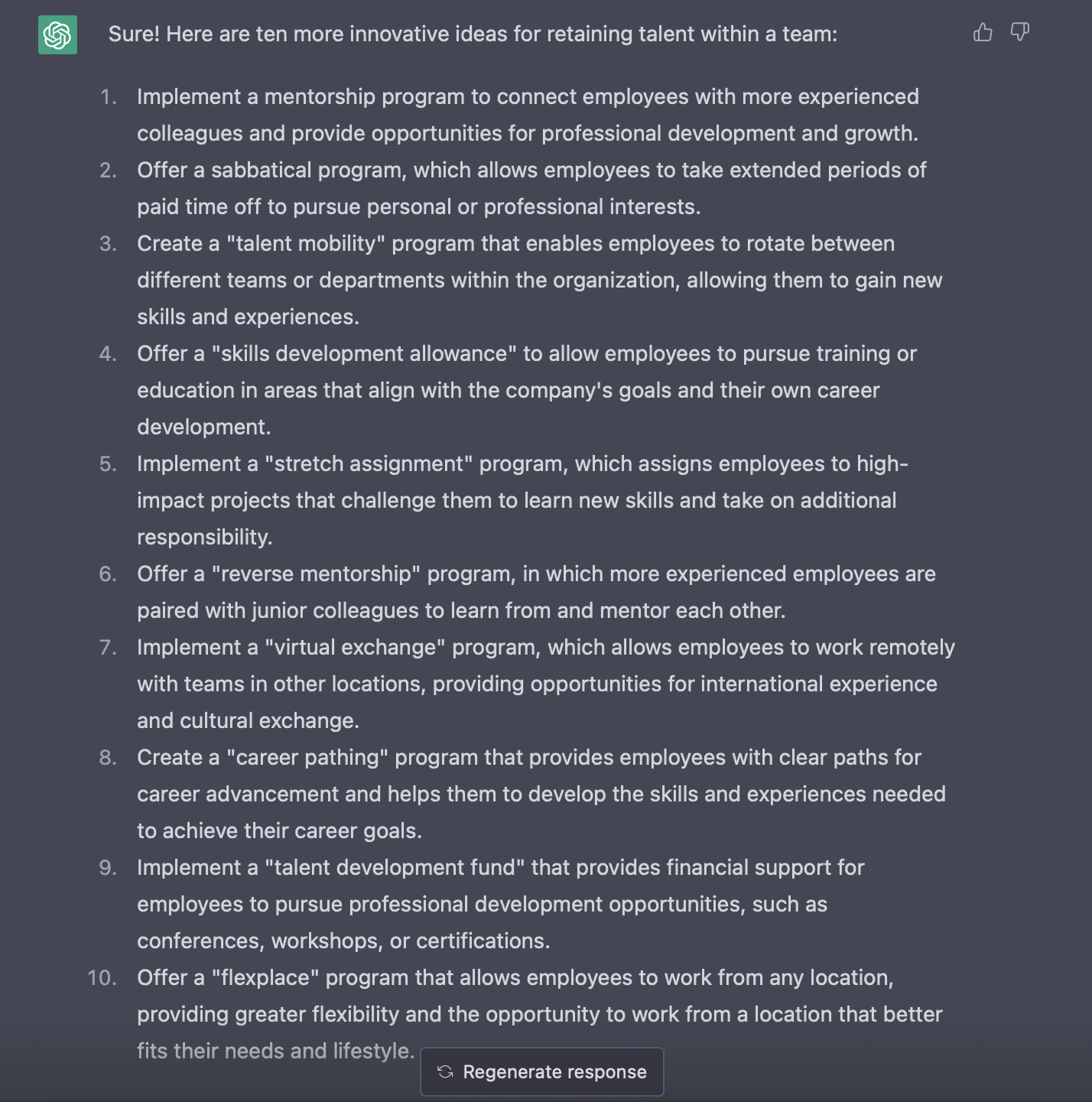

For example, if you ask an AI for ideas on a fairly “universal” theme, like how to retain talent within your team, it can put together a list of ideas. And as you can see, these are sound ideas… but commonplace:

According to his theory in “Think Better” (2007), Tim Hurson would qualify these as “First third” ideas as they are “mundane, every-one-has-thought-of-them-before ideas. These are the early thoughts that lie very close to the surface of our consciousness. They tend not to be new ideas at all but recollections of old ideas we’ve heard elsewhere. They are essentially reproductive thoughts.”

If you want to go further and ask the AI to challenge these ideas to make them more innovative, you can get “Second third” ideas:

These are ideas “that begin to stretch boundaries … more than simple regurgitations of what we’ve heard or thought before” (Tim Hurson, 2007). As you can see, the AI can be a great help for divergence, but these are still not creative ideas that we have never heard of (“Third third” ideas), because those would need an extra input that AI doesn’t have: imagination.

Conclusion: AI machines like LLMs don't try to answer the question asked; they predict the most probable next words in response to the query. The machine does not know the jobs or skills related to the challenge and does not understand the company's situation or what is at stake. So when you ask this type of AI for ideas, the ideas it generates will make sense but will most likely be commonplace rather than innovative.
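To make "predicting the most probable next words" more concrete, here is a deliberately tiny sketch in Python. It is not how ChatGPT or any real LLM is built: it only counts which word most often follows another in a small, made-up list of ideas (the `corpus`, `train_bigrams` and `generate` names are ours, chosen for illustration) and then chains the most frequent follower, one word after the other.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count, for each word, which words follow it in the corpus."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def generate(follows, start, max_words=8):
    """Chain the most frequent next word, one after the other."""
    words = [start]
    for _ in range(max_words - 1):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # no known continuation: stop here
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)

# Hypothetical "training data": ideas the model has already seen.
corpus = [
    "offer flexible working hours",
    "offer flexible career paths",
    "promote flexible working hours",
    "offer training budgets",
]

model = train_bigrams(corpus)
print(generate(model, "offer"))  # prints "offer flexible working hours"
```

The output is simply the most common phrasing already present in the data, which is the intuition behind "first third" ideas: statistically likely, hence familiar.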

Ideas and exploration paths to use AI in collaborative workshops

But let's put the limitations mentioned above into perspective by being honest: radical innovation is, in any case, NOT the goal of many brainstorming sessions. Sometimes the discussions, shared viewpoints and the collective energy built during workshops are just as important as finding ideas.

If we accept that an AI machine will not help our workshops by generating the innovative ideas that a creative brainstorming session can (sometimes) produce, then how can we make this technology useful? If we focus on generative art, here are some potential uses for AI in workshop facilitation to consider:

- Prototyping: If participants write prompts describing their new idea for a product, service, etc., an AI could provide a first draft of it.

- Inspiring content: Create a list of images or words for forced connections.

Of course, as the output of many collaborative workshops relies on written content, LLMs could also be useful for:

- Summarising: AI could be useful for summarising the large amount of data created by participants. This may be less biased than a consultant quickly scanning a large number of post-it notes.

- Thematising: Using statistical features, the AI could sort and gather similar ideas into main themes or categories. Use it wisely: Alexandre discusses the shortcomings of AI-powered clustering and a solution to them.

- Reaction analysis: Based on the words used in the content produced, the AI could determine the proportion of participants in favour of or against an idea.

- Designing sessions: Draft an agenda for your session from a challenge given as a prompt

- Challenge storming: Starting from a given challenge, suggest alternative formulations

- Proofreading: Improve the wording of ideas quickly written by participants

- Providing “first third” ideas: Generate seeds of ideas as conversation starters for your participants
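As an illustration of what "thematising using statistical features" could look like, here is a minimal Python sketch. It is an assumption on our part, not a description of any actual product feature: it groups ideas whenever they share enough words (Jaccard similarity), and the `STOPWORDS` list, the `threshold` value and the sample ideas are all hypothetical.

```python
import re

# Small, hypothetical list of words to ignore when comparing ideas.
STOPWORDS = {"a", "an", "and", "the", "for", "of", "to", "in", "on", "our", "with"}

def keywords(idea):
    """Lowercase the idea and keep its non-trivial words."""
    return {w for w in re.findall(r"[a-z]+", idea.lower()) if w not in STOPWORDS}

def jaccard(a, b):
    """Share of words two ideas have in common (0 = none, 1 = identical)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)

def group_ideas(ideas, threshold=0.2):
    """Put each idea in the first theme it resembles, or start a new theme."""
    themes = []  # each theme is a list of ideas
    for idea in ideas:
        for theme in themes:
            if any(jaccard(keywords(idea), keywords(other)) >= threshold
                   for other in theme):
                theme.append(idea)
                break
        else:
            themes.append([idea])  # nothing similar enough: new theme
    return themes

ideas = [
    "Flexible working hours",
    "Training budget for every employee",
    "Flexible remote working",
    "Yearly training budget review",
]

for theme in group_ideas(ideas):
    print(theme)
```

With these sample ideas, the two "flexible working" ideas end up in one theme and the two "training budget" ideas in another. Word overlap is a crude signal, though: two ideas that mean the same thing in different words would not be grouped, which is one reason to review any automatic clustering before relying on it.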

As with any new technology, it is important to understand the limitations and potential dangers of AI to clearly envision its potential impacts. Our current thinking is that, like any other tool, AI can be used in detrimental or positive ways. We should therefore try to continue learning about it and consider its use to improve the quality of workshop outputs, foster better conversations and use its capabilities to mitigate group biases.

And you, do you think we should rely on AI for collaborative workshops?

Sources

Rosenberg L. (2022) ‘I used generative AI to create pictures of painting robots, but I’m not the artist — humanity is’, bigthink.com https://bigthink.com/high-culture/generative-ai-pictures-humanity-artist/

Marr B. (2022) ‘The 7 Biggest Artificial Intelligence (AI) Trends In 2022’, Forbes.com https://www.forbes.com/sites/bernardmarr/2021/09/24/the-7-biggest-artificial-intelligence-ai-trends-in-2022/

Interview of Luc Julia. (2022) ‘Le 7/9.30 du jeudi 29 décembre 2022’, FranceInter https://www.radiofrance.fr/franceinter/podcasts/le-7-9-30/le-7-9-30-du-jeudi-29-decembre-2022-2994151

Brennan C. (2020) ‘The internet is not ready for the flood of AI-generated text’, mondaynote.com https://mondaynote.com/the-internet-is-not-ready-for-the-flood-of-ai-generated-text-a082976c6186

Arnold K. (2022) ‘The third third of brainstorming’, extraordinaryteam.com https://extraordinaryteam.com/the-third-third-of-brainstorming/

Hurson T. (2008) ‘Think Better’, New York: McGraw-Hill.

Amer, M. (2022) ‘Large Language Models and Where to Use Them: Part 1’, txt.cohere.ai https://txt.cohere.ai/llm-use-cases/